Recently, I have been migrating my personal cloud infrastructure, including some VMs and databases. To better understand how much workload is on each machine and whether the databases or Nginx are under heavy pressure, I chose Vector to collect logs and metrics, and Grafana’s products to store and visualize them.

Vector

Vector is a high-performance observability data pipeline, according to its GitHub description. As I understand it, it consists of three parts:

- Sources: The log and metric inputs that Vector supports are called sources. Examples include Nginx access logs, host metrics such as CPU and network usage, or simply a text file.

- Transforms: Once logs and metrics are collected, we can process them with scripts written in VRL (Vector Remap Language) or with built-in transforms. This is the step where we can change the hostname tag or modify any other data.

- Sinks: This is where our data finally goes! You can choose Loki, Prometheus, Kafka, and other destinations.
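
The three parts map directly onto the sections of a Vector config file. Here is a minimal sketch, assuming a TOML config; the component names `in`, `tag`, and `out` and the `app` value are made up for illustration:

```toml
# Source: read events line by line from standard input.
[sources.in]
type = "stdin"

# Transform: a small VRL program that adds a field to every event.
[transforms.tag]
type = "remap"
inputs = ["in"]
source = '''
.app = "demo"
'''

# Sink: print the resulting events to standard output as JSON.
[sinks.out]
type = "console"
inputs = ["tag"]
encoding.codec = "json"
```

Saved as `vector.toml`, it can be tried locally with `echo hello | vector --config vector.toml`.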

Vector is not the only software that can forward logs, but I think it has some advantages you may like:

- It’s written in Rust, so building the binary is simple and memory safety comes out of the box.

- It’s faster in some cases; see their performance tests.

- Vector can check whether data has been received by some sinks. The file offset should not be advanced if the data failed to be delivered to its destination. Vector publishes a comparison with similar software products on data correctness.

Drawbacks

Although Vector is under active maintenance, it still has some bugs and pain points.

- Customized Nginx log formats are not well supported. It seems that Vector uses a built-in expression for the log format to parse them. You may need to change some code and build your own binary to get a customized log format supported.

- The datatypes of some MongoDB metrics are not correct. This causes an overflow when a value is bigger than the maximum value of a 32-bit integer. I am following this issue and have created some PRs. From my investigation, MongoDB returns dynamic datatypes: if a number overflows the maximum value of a 32-bit integer, it is returned in BSON as a 64-bit integer. So we’d better change all integers to 64-bit. In addition, one field is a float in MongoDB 4 but an integer in MongoDB 5. Mapping every datatype correctly may be hard work.
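
On the first point, one workaround that may avoid a custom build, depending on the Vector version, is to parse each line in a `remap` transform with VRL’s `parse_regex` function. The sketch below has not been tested against a real deployment and assumes a hypothetical Nginx `log_format` of `$remote_addr $request_time "$request" $status`; the component names, log path, and regex are illustrative only, and the sink is left out:

```toml
# Tail the access log as plain text lines (path is an assumption).
[sources.nginx_access]
type = "file"
include = ["/var/log/nginx/access.log"]

# Parse the hypothetical custom format with named capture groups
# and merge the captured fields into the event.
[transforms.parse_custom_nginx]
type = "remap"
inputs = ["nginx_access"]
source = '''
. |= parse_regex!(.message, r'^(?P<remote_addr>\S+) (?P<request_time>\S+) "(?P<request>[^"]*)" (?P<status>\d{3})$')
'''
```

To use it, `parse_custom_nginx` would be listed in the `inputs` of whichever sink should receive the parsed logs.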

Grafana Cloud

Grafana Cloud consists of the following parts:

- Grafana

- Prometheus

- Loki

- Graphite

- Alerts

- Tempo

I am currently using the free tier. It’s quite enough for an individual with a few servers and services. Allow me to introduce the services I use:

Loki: It’s basically a log aggregation system that does not index the whole log content. In my setup, Vector sends Nginx access and error logs to Loki, and Loki uses some tags from Vector, such as hostname, app, and forward, to index the logs. You can also add more tags with VRL scripts.

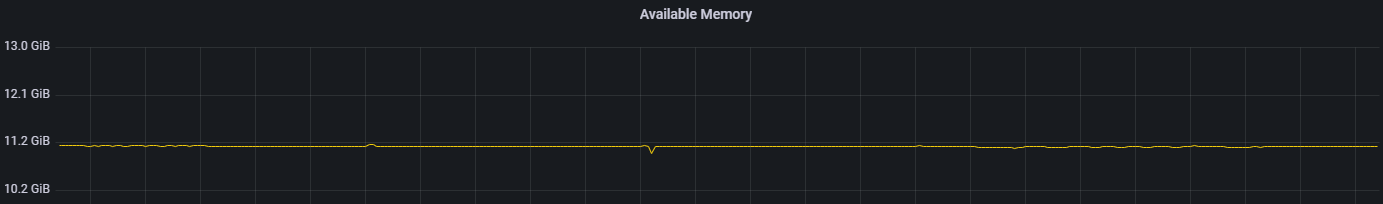
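
Shipping Nginx logs to Loki with a couple of labels could look roughly like the sketch below. It assumes Vector’s `file` source and `loki` sink; the log paths, endpoint URL, user, and environment variable are placeholders, and only the `hostname` and `app` labels are shown:

```toml
# Tail the Nginx logs (paths are assumptions for a default install).
[sources.nginx_logs]
type = "file"
include = ["/var/log/nginx/access.log", "/var/log/nginx/error.log"]

# Add an `app` field with VRL so it can be used as a label below.
[transforms.label_nginx]
type = "remap"
inputs = ["nginx_logs"]
source = '''
.app = "nginx"
'''

# Send the logs to Loki; label values are templates over event fields.
[sinks.loki]
type = "loki"
inputs = ["label_nginx"]
endpoint = "https://loki.example.invalid"  # placeholder, use your own Loki URL
encoding.codec = "json"
labels.hostname = "{{ host }}"             # `host` is added by the file source
labels.app = "{{ app }}"
auth.strategy = "basic"
auth.user = "123456"                       # placeholder user ID
auth.password = "${LOKI_API_KEY}"          # read from the environment
```

Keeping the label set small matters here, because Loki indexes only the labels and not the log content.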

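Prometheus: This is a better-known project. It is not a Grafana Labs product (it started at SoundCloud and is now a CNCF project), but Grafana Cloud offers it as a hosted metrics service. We usually use it to monitor Docker, Kubernetes, and our applications. Vector can send host metrics (CPU, network, file system, etc.) and Nginx metrics (such as the number of active connections) to it.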
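
For the Nginx metrics, a minimal sketch assuming Vector’s `nginx_metrics` source, which scrapes Nginx’s `stub_status` page. The status URL is an assumption about the local Nginx config, and the `console` sink is only there to inspect the metrics locally before wiring them to Prometheus:

```toml
# Scrape the stub_status endpoint (must be enabled in nginx.conf).
[sources.nginx]
type = "nginx_metrics"
endpoints = ["http://localhost/basic_status"]  # assumed status URL
scrape_interval_secs = 15

# Print the collected metrics to stdout for a quick local check.
[sinks.debug]
type = "console"
inputs = ["nginx"]
encoding.codec = "json"
```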

Grafana: Grafana allows us to explore and visualize data collected by Prometheus and Loki.

Integration

Configuring Vector is simple and straightforward. Just follow the official guide to write a TOML config file and pass its path to Vector.

Here is an example of a config file:

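A minimal sketch of what such a config might look like, assuming the `host_metrics` source, a `remap` transform, and the `prometheus_remote_write` sink. The host name, endpoint URL, user, and environment variable are placeholders to be replaced with the values from your own Grafana Cloud stack:

```toml
# Collect host-level metrics every 15 seconds.
[sources.host]
type = "host_metrics"
collectors = ["cpu", "memory", "network", "filesystem"]
scrape_interval_secs = 15

# Overwrite the `host` tag on every metric with a friendlier name.
[transforms.set_host]
type = "remap"
inputs = ["host"]
source = '''
.tags.host = "my-vm-1"
'''

# Push the metrics to a Prometheus remote-write endpoint.
[sinks.prometheus]
type = "prometheus_remote_write"
inputs = ["set_host"]
endpoint = "https://prometheus.example.invalid/api/prom/push"  # placeholder URL
auth.strategy = "basic"
auth.user = "123456"                      # placeholder user ID
auth.password = "${PROMETHEUS_API_KEY}"   # read from the environment
```

The config can be checked with `vector validate vector.toml` and then run with `vector --config vector.toml`.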

We use a transform to change the host tag in the host metrics and then send them to Prometheus. After that, we can visualize and monitor them in Grafana!

Adding more sources, such as Nginx or a log tail, is quite similar. That’s all for now. I’d like to share more about observability when I have more findings. Technologies like eBPF are high-profile these days!